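Apache Kafka article series

1. Kafka (2.12-3.0.0): introduction, deployment, verification, and benchmarking

2. Calling the Kafka API from Java

3. Key Kafka concepts with examples

4. Kafka partitions and replicas with examples

5. The Kafka monitoring tool Kafka-Eagle: introduction and usage

This article shows how to use Kafka-Eagle, a monitoring tool for Kafka.

Prerequisites: Kafka and ZooKeeper are already deployed.

The article has three parts: an introduction to Kafka-Eagle, its deployment, and verification.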

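I. Introduction to Kafka-Eagle

In the past, a Kafka cluster could be monitored with Kafka Monitor or Kafka Manager, but as the demands on monitoring features and performance grew, these tools were no longer sufficient.

Kafka Eagle is a free, open-source monitoring tool for Kafka clusters, built from scratch to combine the strengths of the existing Kafka monitoring tools. It makes it easy to monitor offsets, lag changes, partition distribution, owners, and more in a production environment.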

Official site: https://www.kafka-eagle.org/

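II. Installing Kafka-Eagle

1. Enable the Kafka JMX port

JMX (Java Management Extensions) is a framework for adding management capabilities to applications. It defines a standard set of agents and services that any Java application can use for management. Many software products expose a JMX interface for management and monitoring.

- Enable Kafka JMX

Set JMX_PORT in front of the command that starts Kafka: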

cd /usr/local/bigdata/kafka_2.12-3.0.0/bin

export JMX_PORT=9988; nohup kafka-server-start.sh /usr/local/bigdata/kafka_2.12-3.0.0/config/server.properties &

- Modify the one-click start script to add export JMX_PORT=9988, as follows:

[alanchan@server1 onekeystart]$ cat kafkaCluster.sh

#!/bin/sh

case $1 in

"start"){

for host in server1 server2 server3

do

ssh $host "source /etc/profile;export JMX_PORT=9988; nohup ${KAFKA_HOME}/bin/kafka-server-start.sh ${KAFKA_HOME}/config/server.properties > /dev/null 2>&1 &"

echo "$host kafka is running..."

sleep 1.5s

done

};;

"stop"){

for host in server1 server2 server3

do

ssh $host "source /etc/profile; nohup ${KAFKA_HOME}/bin/kafka-server-stop.sh > /dev/null 2>&1 &"

echo "$host kafka is stopping..."

sleep 1.5s

done

};;

esac
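
After the brokers have been restarted with JMX_PORT set, it can be useful to confirm from the machine that will run Kafka-Eagle that the port is reachable. The following is only a minimal sketch: the function names check_jmx_port and report_jmx are hypothetical, and it assumes that bash (for its /dev/tcp feature) and the coreutils timeout command are installed. An open TCP port only suggests that JMX is listening; it does not prove that the JMX service itself is healthy.

```shell
# Hypothetical helper: succeeds if a TCP connection to host:port opens within 2 seconds.
# Assumes bash and coreutils `timeout` are available.
check_jmx_port() {
  # $1 = host, $2 = port
  timeout 2 bash -c 'exec 3<>"/dev/tcp/$1/$2"' _ "$1" "$2" 2>/dev/null
}

# Print one line per host saying whether the given port is open or closed.
report_jmx() {
  port=$1
  shift
  for host in "$@"; do
    if check_jmx_port "$host" "$port"; then
      echo "$host:$port open"
    else
      echo "$host:$port closed"
    fi
  done
}
```

With the hosts and port used in this article, the call would be: report_jmx 9988 server1 server2 server3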

2. Install Kafka-Eagle

1) Install the JDK and configure JAVA_HOME.

2) Extract the archive

Upload the kafka-eagle archive and extract it into /usr/local/bigdata:

cd /usr/local/tools/

tar -xvzf kafka-eagle-bin-3.0.1.tar.gz -C /usr/local/bigdata

[alanchan@server1 tools]$ tar -xvzf kafka-eagle-bin-3.0.1.tar.gz -C /usr/local/bigdata

kafka-eagle-bin-3.0.1/

kafka-eagle-bin-3.0.1/efak-web-3.0.1-bin.tar.gz

cd /usr/local/bigdata/kafka-eagle-bin-3.0.1

tar -xvzf efak-web-3.0.1-bin.tar.gz

[alanchan@server1 kafka-eagle-bin-3.0.1]$ ll

total 87844

drwxr-xr-x 8 alanchan root 4096 Jan 16 07:50 efak-web-3.0.1

-rw-r--r-- 1 alanchan root 89947836 Sep 6 04:45 efak-web-3.0.1-bin.tar.gz

[alanchan@server1 kafka-eagle-bin-3.0.1]$ cd efak-web-3.0.1

[alanchan@server1 efak-web-3.0.1]$ ll

total 24

drwxr-xr-x 2 alanchan root 4096 Jan 16 07:50 bin

drwxr-xr-x 2 alanchan root 4096 Jan 16 07:50 conf

drwxr-xr-x 2 alanchan root 4096 Sep 12 2021 db

drwxr-xr-x 2 alanchan root 4096 Jan 16 07:50 font

drwxr-xr-x 9 alanchan root 4096 Feb 23 2022 kms

drwxr-xr-x 2 alanchan root 4096 Apr 1 2022 logs

3) Configure the kafka_eagle environment variables.

vim /etc/profile

export KE_HOME=/usr/local/bigdata/kafka-eagle-bin-3.0.1/efak-web-3.0.1

export PATH=$PATH:$KE_HOME/bin

source /etc/profile
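
Before moving on, a quick check that the variable points at the right directory can save some confusion later. This is only a sketch; the function name check_ke_home is hypothetical, and it assumes that ke.sh under $KE_HOME/bin should be executable.

```shell
# Hypothetical check: KE_HOME must be set and contain an executable bin/ke.sh.
check_ke_home() {
  if [ -z "${KE_HOME:-}" ]; then
    echo "KE_HOME is not set" >&2
    return 1
  fi
  if [ ! -x "$KE_HOME/bin/ke.sh" ]; then
    echo "ke.sh not found or not executable under $KE_HOME/bin" >&2
    return 1
  fi
  echo "KE_HOME ok: $KE_HOME"
}
```

Run check_ke_home in a new shell after source /etc/profile. If it reports that ke.sh is not executable, chmod +x $KE_HOME/bin/ke.sh is the usual remedy.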

4) Configure kafka_eagle

From the efak-web-3.0.1 directory, open system-config.properties in the conf directory with vim:

vim conf/system-config.properties

# Set the Kafka cluster alias (the 'kafka.eagle.' prefix is deprecated, so use 'efak.')

efak.zk.cluster.alias=cluster1

# Set the ZooKeeper cluster addresses

cluster1.zk.list=server1:2118,server2:2118,server3:2118

# Comment out the unused cluster2 entry

#cluster2.zk.list=xdn10:2181,xdn11:2181,xdn12:2181

# Enable MySQL as the storage backend

efak.driver=com.mysql.cj.jdbc.Driver

efak.url=jdbc:mysql://192.168.10.44:3306/ke?useUnicode=true&characterEncoding=UTF-8&zeroDateTimeBehavior=convertToNull

efak.username=root

efak.password=888888
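
After editing, it is easy to overlook a key that is still commented out or empty. The sketch below reads values back from the properties file so they can be inspected before starting the service. The helper names get_prop and check_required_props are hypothetical and not part of Kafka-Eagle; the parsing assumes plain key=value lines without spaces around the equals sign, as in the file shown in this article.

```shell
# Hypothetical helper: print the value of the last uncommented "key=value" line in a file.
get_prop() {
  # $1 = properties file, $2 = key
  awk -v key="$2" '
    index($0, key "=") == 1 { val = substr($0, length(key) + 2) }
    END { print val }
  ' "$1"
}

# Hypothetical helper: return non-zero if any of the listed keys is missing or empty.
check_required_props() {
  file=$1
  shift
  missing=0
  for key in "$@"; do
    if [ -z "$(get_prop "$file" "$key")" ]; then
      echo "missing: $key" >&2
      missing=1
    fi
  done
  return "$missing"
}
```

For example: check_required_props conf/system-config.properties efak.zk.cluster.alias cluster1.zk.list efak.driver efak.url efak.username efak.password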

Complete configuration file

######################################

# multi zookeeper & kafka cluster list

# Settings prefixed with 'kafka.eagle.' will be deprecated, use 'efak.' instead

######################################

efak.zk.cluster.alias=cluster1

cluster1.zk.list=server1:2118,server2:2118,server3:2118

#cluster2.zk.list=xdn10:2181,xdn11:2181,xdn12:2181

######################################

# zookeeper enable acl

######################################

cluster1.zk.acl.enable=false

cluster1.zk.acl.schema=digest

cluster1.zk.acl.username=test

cluster1.zk.acl.password=test123

######################################

# broker size online list

######################################

cluster1.efak.broker.size=20

######################################

# zk client thread limit

######################################

kafka.zk.limit.size=16

######################################

# EFAK webui port

######################################

efak.webui.port=8048

######################################

# EFAK enable distributed

######################################

efak.distributed.enable=false

efak.cluster.mode.status=master

efak.worknode.master.host=localhost

efak.worknode.port=8085

######################################

# kafka jmx acl and ssl authenticate

######################################

cluster1.efak.jmx.acl=false

cluster1.efak.jmx.user=keadmin

cluster1.efak.jmx.password=keadmin123

cluster1.efak.jmx.ssl=false

cluster1.efak.jmx.truststore.location=/data/ssl/certificates/kafka.truststore

cluster1.efak.jmx.truststore.password=ke123456

######################################

# kafka offset storage

######################################

cluster1.efak.offset.storage=kafka

cluster2.efak.offset.storage=zk

######################################

# kafka jmx uri

######################################

cluster1.efak.jmx.uri=service:jmx:rmi:///jndi/rmi://%s/jmxrmi

######################################

# kafka metrics, 15 days by default

######################################

efak.metrics.charts=true

efak.metrics.retain=15

######################################

# kafka sql topic records max

######################################

efak.sql.topic.records.max=5000

efak.sql.topic.preview.records.max=10

######################################

# delete kafka topic token

######################################

efak.topic.token=keadmin

######################################

# kafka sasl authenticate

######################################

cluster1.efak.sasl.enable=false

cluster1.efak.sasl.protocol=SASL_PLAINTEXT

cluster1.efak.sasl.mechanism=SCRAM-SHA-256

cluster1.efak.sasl.jaas.config=org.apache.kafka.common.security.scram.ScramLoginModule required username="kafka" password="kafka-eagle";

cluster1.efak.sasl.client.id=

cluster1.efak.blacklist.topics=

cluster1.efak.sasl.cgroup.enable=false

cluster1.efak.sasl.cgroup.topics=

cluster2.efak.sasl.enable=false

cluster2.efak.sasl.protocol=SASL_PLAINTEXT

cluster2.efak.sasl.mechanism=PLAIN

cluster2.efak.sasl.jaas.config=org.apache.kafka.common.security.plain.PlainLoginModule required username="kafka" password="kafka-eagle";

cluster2.efak.sasl.client.id=

cluster2.efak.blacklist.topics=

cluster2.efak.sasl.cgroup.enable=false

cluster2.efak.sasl.cgroup.topics=

######################################

# kafka ssl authenticate

######################################

cluster3.efak.ssl.enable=false

cluster3.efak.ssl.protocol=SSL

cluster3.efak.ssl.truststore.location=

cluster3.efak.ssl.truststore.password=

cluster3.efak.ssl.keystore.location=

cluster3.efak.ssl.keystore.password=

cluster3.efak.ssl.key.password=

cluster3.efak.ssl.endpoint.identification.algorithm=https

cluster3.efak.blacklist.topics=

cluster3.efak.ssl.cgroup.enable=false

cluster3.efak.ssl.cgroup.topics=

######################################

# kafka sqlite jdbc driver address

######################################

#efak.driver=org.sqlite.JDBC

#efak.url=jdbc:sqlite:/hadoop/kafka-eagle/db/ke.db

#efak.username=root

#efak.password=www.kafka-eagle.org

######################################

# kafka mysql jdbc driver address

######################################

efak.driver=com.mysql.cj.jdbc.Driver

efak.url=jdbc:mysql://192.168.10.44:3306/ke?useUnicode=true&characterEncoding=UTF-8&zeroDateTimeBehavior=convertToNull

efak.username=root

efak.password=888888
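
The cluster1.efak.jmx.uri entry above contains a %s placeholder. As far as I understand, Kafka-Eagle fills it with each broker's host and JMX port, which is why the JMX_PORT exported earlier matters. The sketch below only illustrates that substitution; the function name jmx_url is hypothetical and this is not Kafka-Eagle's own code.

```shell
# Hypothetical illustration: expand the jmx.uri template for one broker.
jmx_url() {
  # $1 = broker host, $2 = JMX port
  printf 'service:jmx:rmi:///jndi/rmi://%s/jmxrmi\n' "$1:$2"
}

jmx_url server1 9988
```

The resulting URL can also be pasted into a JMX client such as jconsole to inspect the same broker by hand.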

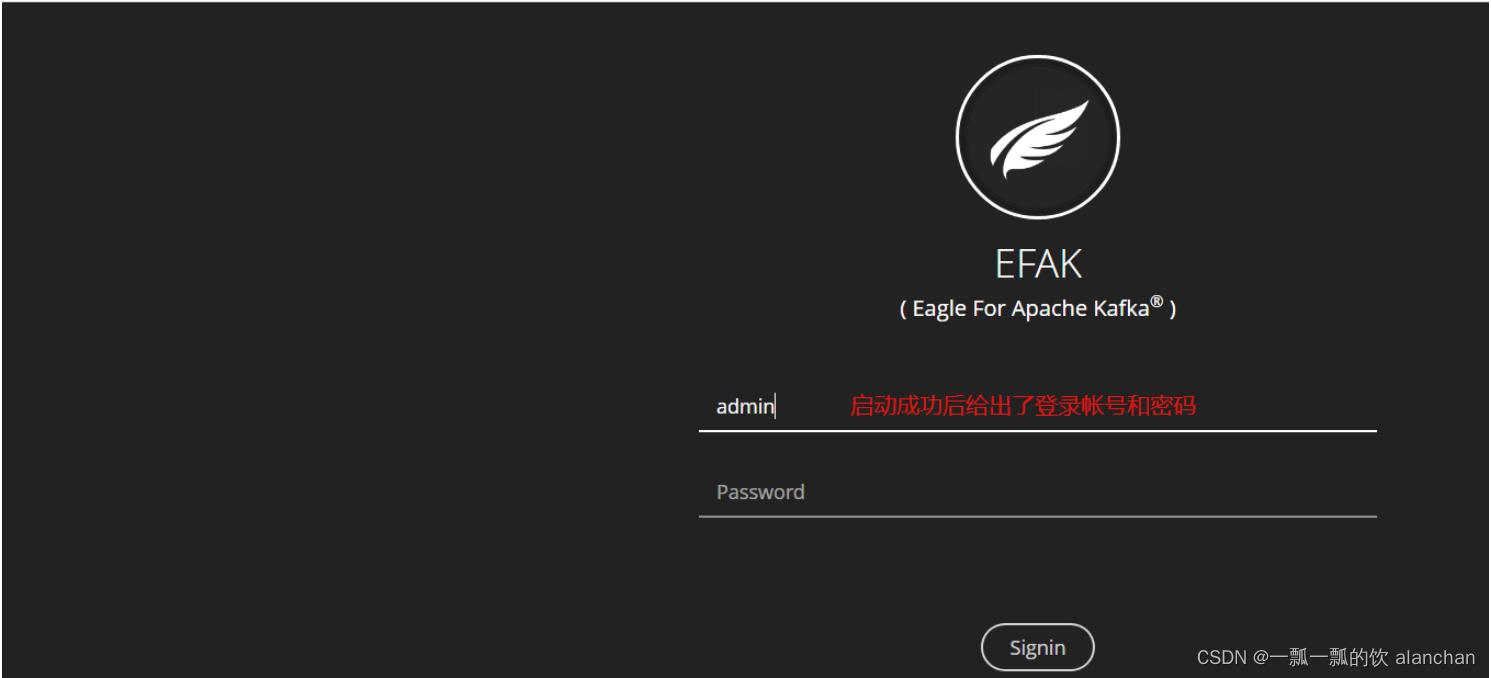

5) Start

[alanchan@server1 bin]$ ke.sh

Usage: ./ke.sh {start|stop|restart|status|stats|find|gc|jdk|version|sdate|cluster}

ke.sh start

[2023-01-16 08:05:35] INFO: Port Progress: [##################################################] | 100%

[2023-01-16 08:05:38] INFO: Config Progress: [##################################################] | 100%

[2023-01-16 08:05:41] INFO: Startup Progress: [##################################################] | 100%

[2023-01-16 08:05:31] INFO: Status Code[0]

[2023-01-16 08:05:31] INFO: [Job done!]

Welcome to

______ ______ ___ __ __

/ ____/ / ____/ / | / //_/

/ __/ / /_ / /| | / ,<

/ /___ / __/ / ___ | / /| |

/_____/ /_/ /_/ |_|/_/ |_|

( Eagle For Apache Kafka® )

Version v3.0.1 -- Copyright 2016-2022

*******************************************************************

* EFAK Service has started success.

* Welcome, Now you can visit 'http://192.168.10.41:8048'

* Account:admin ,Password:123456

*******************************************************************

* <Usage> ke.sh [start|status|stop|restart|stats] </Usage>

* <Usage> https://www.kafka-eagle.org/ </Usage>

*******************************************************************

The author also created a separate user: alanchan, password 123456.

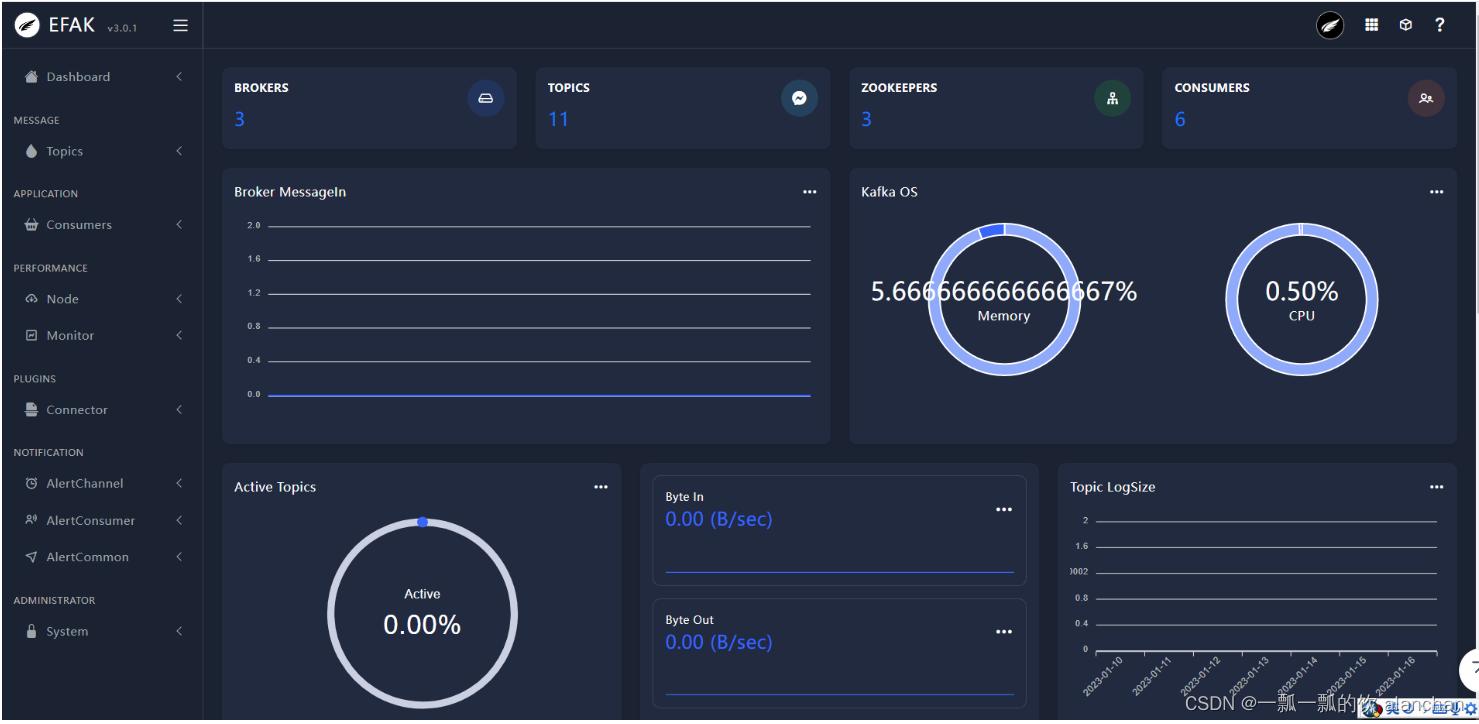

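III. Verification

Open http://192.168.10.41:8048 in a browser and log in with the account shown in the startup output (admin / 123456).

This completes the introduction to and use of the Kafka monitoring tool Kafka-Eagle.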
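
On a server without a browser, the same check can be approximated from the command line. This is a rough sketch that assumes curl is installed; the function names efak_http_status and efak_is_up are hypothetical, and treating any 2xx or 3xx status as "up" is my own assumption, since the web UI may redirect to a login page.

```shell
# Hypothetical helper: print the HTTP status code returned by the given URL.
# curl prints 000 when the connection fails.
efak_http_status() {
  curl -s -o /dev/null -m 5 -w '%{http_code}' "$1"
}

# Hypothetical helper: succeed if the URL answers with a 2xx or 3xx status.
efak_is_up() {
  code=$(efak_http_status "$1" || true)
  case $code in
    2??|3??) return 0 ;;
    *) return 1 ;;
  esac
}
```

For the address used in this article: efak_is_up http://192.168.10.41:8048 && echo "EFAK web UI is reachable"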
